Photo: Wikimedia Commons

#99 · Researcher · ML Theory & Optimization

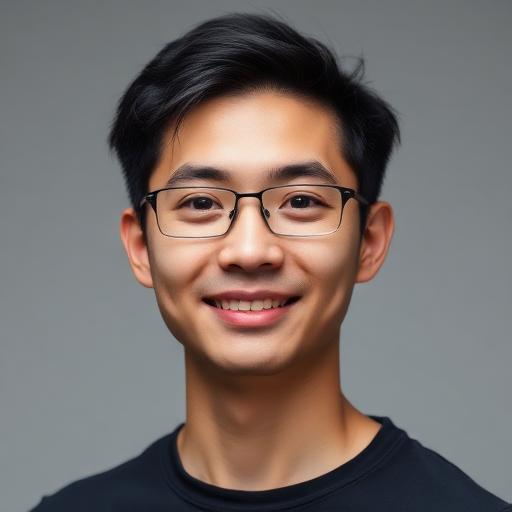

Albert Gu

CMU / Cartesia

"State-space models offer a principled alternative to attention mechanisms with linear-time complexity."

Biography

Assistant Professor at CMU and co-founder of Cartesia. Co-creator of Mamba (with Tri Dao), a state-space architecture challenging transformer dominance.

💡 My Take

Gu might be the person who breaks the Transformer's monopoly. Mamba's compute scales linearly with sequence length, which sidesteps the quadratic cost of self-attention, the main obstacle to very long contexts. If state-space models prove competitive across all modalities, it would be a paradigm shift. His S4 and Mamba papers are technically demanding but essential reading.
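To make the linear-time point concrete, here is a minimal sketch of a plain diagonal state-space recurrence next to a count of the score pairs that full causal attention computes. This is not Mamba itself: Mamba makes its parameters input-dependent and relies on a hardware-aware scan, both omitted here. The names `ssm_scan` and `attention_pair_count` and the parameters `a`, `b`, `c` are illustrative assumptions, not taken from any paper or library.

```python
def ssm_scan(a, b, c, inputs):
    """Run a toy diagonal linear state-space recurrence over a sequence.

    Illustrative only (fixed parameters, scalar inputs):
        h_t = a * h_{t-1} + b * x_t   (elementwise over the state)
        y_t = sum(c * h_t)
    Each step touches only the fixed-size state, so the total work
    grows linearly with the sequence length.
    """
    state = [0.0] * len(a)
    outputs = []
    for x in inputs:
        state = [a_i * h_i + b_i * x for a_i, b_i, h_i in zip(a, b, state)]
        outputs.append(sum(c_i * h_i for c_i, h_i in zip(c, state)))
    return outputs


def attention_pair_count(length):
    """Count query-key score pairs in full causal self-attention.

    Token t attends to t positions, so the total is L * (L + 1) / 2,
    which grows quadratically with the sequence length.
    """
    return length * (length + 1) // 2


if __name__ == "__main__":
    # An impulse decays geometrically through a one-dimensional state.
    print(ssm_scan([0.5], [1.0], [1.0], [1.0, 0.0, 0.0]))
    # Doubling the length roughly quadruples the attention pairs.
    print(attention_pair_count(1000), attention_pair_count(2000))
```

The recurrence carries a fixed-size state forward one step at a time, whereas attention compares every token with every earlier token, which is where the gap in scaling comes from.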

Key Influence

Developed structured state-space sequence models (S4, Mamba), offering a viable alternative to transformer architectures

Awards & Recognition

Co-creator of the S4 and Mamba state-space models; CMU professor